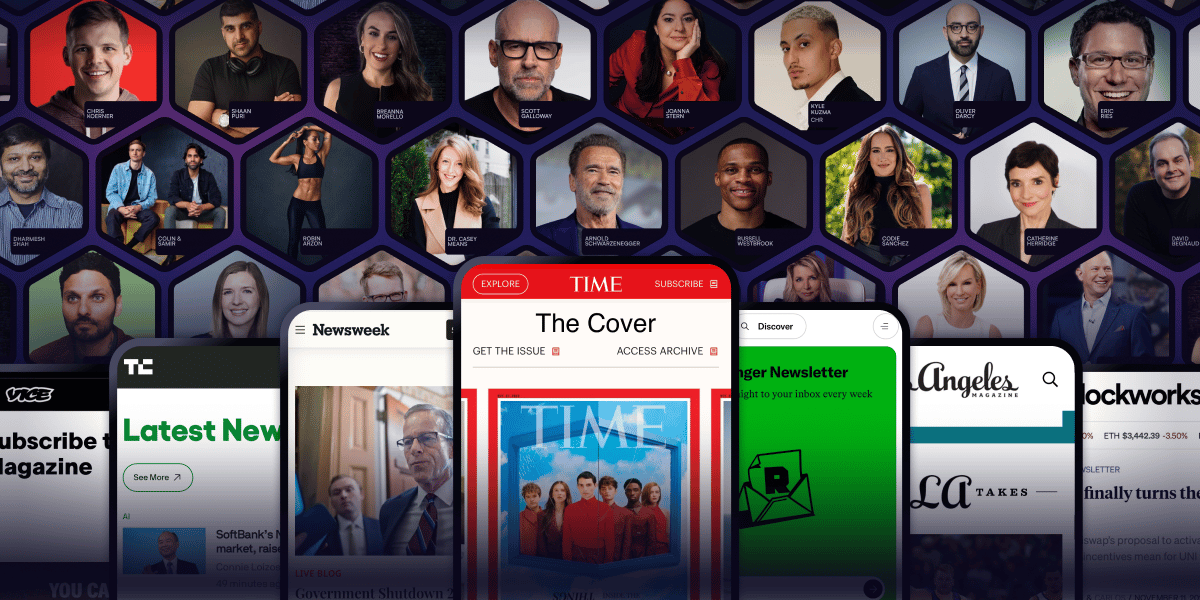

There is a phrase that comes up in almost every founder presentation I have seen.

"On average, our customers..."

And then a number. Average revenue per user. Average order value. Average lifetime value. Average retention. The number sits in the deck, gets quoted in board meetings, and becomes the basis for forecasts and acquisition decisions.

The problem is that the average customer often does not exist. The number is real, in the sense that the math is correct, but it describes nobody. It is the mathematical centre of two or three or four very different groups of customers behaving in very different ways, and the brands that mistake the average for reality end up making decisions based on a fiction.
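A small worked example makes the point concrete. The spend figures below are invented purely for illustration: eight occasional buyers and two heavy repeat buyers. The sketch computes the blended average and then counts how many customers actually sit anywhere near it.

```python
from statistics import mean

# Hypothetical lifetime spend per customer (invented for illustration).
occasional = [40, 45, 50, 55, 60, 40, 50, 60]  # eight occasional buyers
heavy = [700, 900]                              # two heavy repeat buyers

all_customers = occasional + heavy
blended_average = mean(all_customers)

print(f"Blended average:    {blended_average:.0f}")
print(f"Occasional average: {mean(occasional):.0f}")
print(f"Heavy average:      {mean(heavy):.0f}")

# Count customers whose spend is within 25% of the blended average.
near_average = [
    spend for spend in all_customers
    if abs(spend - blended_average) <= 0.25 * blended_average
]
print(f"Customers near the blended average: {len(near_average)}")
```

With these made-up numbers the blended average is 200, yet no customer spends anything close to 200. The math is correct and the customer it describes is not in the data.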

The way to get behind the average is cohort analysis. And cohort analysis, done properly, almost always reveals that the story you have been telling about your business is materially wrong.

What a cohort actually is

A cohort is a group of customers who share a defining characteristic, most often the time period they were acquired in. The customers who joined in January are one cohort. The customers who joined in February are another. The customers who joined through Meta are a different cohort from those who joined through organic search.

When you look at the behaviour of a single cohort over time, instead of all your customers blended, the numbers start telling a much more honest story. You can see whether new customers are behaving the same way as old ones. You can see whether one acquisition channel produces dramatically better customers than another. You can see whether a product change actually improved retention or just briefly looked like it did.

The most common cohort lies

The most common pattern in cohort analysis is the one that catches almost every growing brand by surprise.

The aggregate retention curve looks stable. Repeat purchase rate, month over month, holds steady. Average customer lifetime value stays where it has always been. The team congratulates itself on a healthy business.

But underneath that aggregate, something is happening. Each new cohort is performing a little worse than the one before. The customers acquired in 2021 are the best customers the brand has ever had. They have high retention, high LTV, and they refer aggressively. The customers acquired in 2022 are slightly worse. The 2023 cohort is noticeably worse. The 2024 cohort is much worse than the 2023 one.

But because the older cohorts are still active, the aggregate retention curve looks fine. The 2021 cohort is propping up the numbers. The brand keeps acquiring customers, and on the dashboard, things look healthy.

That holds until the older cohorts naturally start to age out. Then the aggregate suddenly starts dropping, and there is a panic, because the brand is realising what cohort analysis would have told it years earlier. New customer quality had been declining for a long time. Acquisition was getting less efficient. The product experience for new customers was deteriorating relative to that of early customers. Something fundamental was wrong, and the aggregate hid it.
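The masking effect can be sketched with a few lines of arithmetic. The cohort sizes and repeat rates below are invented, and the sketch makes a simplifying assumption that every cohort stays active and keeps its own repeat rate. It compares the newest cohort's rate with the blended rate across every customer acquired so far.

```python
# Hypothetical yearly cohorts: (year acquired, customers, repeat rate).
# All numbers are invented to illustrate how the blend lags the newest cohort.
cohorts = [
    (2021, 1000, 0.50),
    (2022, 1000, 0.44),
    (2023, 1000, 0.36),
    (2024, 1000, 0.26),
]


def blended_repeat_rate(cohorts_so_far):
    """Repeat rate across all customers acquired so far, weighted by cohort size."""
    repeaters = sum(size * rate for _, size, rate in cohorts_so_far)
    customers = sum(size for _, size, _ in cohorts_so_far)
    return repeaters / customers


rows = []
for i, (year, size, rate) in enumerate(cohorts):
    blended = blended_repeat_rate(cohorts[: i + 1])
    rows.append((year, rate, blended))
    print(f"{year}: newest cohort {rate:.0%}, blended {blended:.0%}")
```

Under these assumptions the newest cohort's repeat rate falls to roughly half of where the first cohort started, while the blended figure slips far more gently because the early cohorts still carry weight in it.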

What cohort analysis can reveal

When you start looking at your business by cohort, several patterns emerge that are almost impossible to see otherwise.

The first is acquisition channel quality differences. Customers acquired through Google search behave very differently from customers acquired through Instagram ads. Their LTV differs. Their repeat behaviour differs. Their referral rates differ. If you only look at aggregate numbers, you will optimise for the average, which means you might be over-investing in a high-volume channel that produces low-quality customers and under-investing in a lower-volume channel that produces excellent ones.

The second is the impact of product changes. If you ship a new onboarding flow in March, the right way to measure its impact is to compare the cohort acquired before March to the cohort acquired after. If retention improved for the post-March cohort, the change worked. The aggregate cannot tell you this because it blends pre- and post-change customers together.
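A minimal sketch of that before-and-after comparison follows. The record layout, the function name `repeat_rate_before_after`, the 90-day window, and the sample data are all assumptions for illustration. It also assumes every customer in the data has had the full window to reorder, and a real comparison would need far more customers than this before the difference means anything.

```python
from datetime import date


def repeat_rate_before_after(customers, change_date, window_days=90):
    """Compare repeat rates for customers acquired before vs. on/after a change.

    customers: iterable of (acquired_on, days_to_second_order or None).
    Returns (rate_before, rate_after); a rate is None if that group is empty.
    """
    before = [d for acquired, d in customers if acquired < change_date]
    after = [d for acquired, d in customers if acquired >= change_date]

    def rate(group):
        if not group:
            return None
        repeated = sum(1 for d in group if d is not None and d <= window_days)
        return repeated / len(group)

    return rate(before), rate(after)


# Hypothetical customers: acquisition date and days until their second order.
sample = [
    (date(2024, 1, 10), 20), (date(2024, 1, 25), 120),
    (date(2024, 2, 2), None), (date(2024, 2, 20), None),
    (date(2024, 3, 5), 30), (date(2024, 3, 18), 75),
    (date(2024, 4, 2), None), (date(2024, 4, 9), 10),
]

rate_before, rate_after = repeat_rate_before_after(sample, date(2024, 3, 1))
print(f"Before change: {rate_before:.0%}, after change: {rate_after:.0%}")
```

Splitting on acquisition date is what keeps pre-change and post-change customers from being blended into one number.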

The third is the effect of pricing or offer changes. A discount campaign in November might bring in a high volume of customers, but if you look at that November cohort six months later, you might find that the customers acquired through aggressive discounting churned at much higher rates than full-price customers. The aggregate would say the campaign was successful. The cohort tells you it was a long-term loss.

The fourth is the silent decline. New customer quality often deteriorates slowly as a brand scales acquisition. Each new cohort spends a bit less, churns a bit faster. The aggregate hides this completely. The cohort exposes it months earlier than the eventual reckoning would.

The minimum cohort discipline

You do not need expensive tools or a data team to start doing this. The minimum useful cohort analysis takes about an afternoon to set up.

Pull your customer data. For each customer, capture their acquisition date and their acquisition channel. Then track for each customer how much they have spent, how many times they have ordered, and whether they have made a repeat purchase.

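Now group customers by month of acquisition. For each monthly cohort, calculate the percentage who made a second purchase within 30 days, 60 days, 90 days, and 180 days. Only report a window for a cohort that has fully lived through it: a cohort acquired two months ago has no 180-day figure yet, and treating it as zero would make recent cohorts look worse than they are. Plot these numbers month by month for the last 12 to 18 months.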
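The steps above can be sketched in plain Python with no extra tooling. The input layout (a list of `(customer_id, order_date)` pairs), the function name `cohort_repeat_rates`, and the sample orders are assumptions for illustration; your export will look different. The sketch leaves a window blank when no customer in the cohort has had enough time to reach it.

```python
from collections import defaultdict
from datetime import date

WINDOWS = (30, 60, 90, 180)  # days after the first order


def cohort_repeat_rates(orders, as_of):
    """Build {cohort_month: {window_days: repeat rate or None}}.

    orders: iterable of (customer_id, order_date).
    as_of:  the date the data was pulled.
    """
    # Collect every order date per customer.
    by_customer = defaultdict(list)
    for customer_id, order_date in orders:
        by_customer[customer_id].append(order_date)

    # Group customers by the month of their first order.
    cohorts = defaultdict(list)
    for dates in by_customer.values():
        dates.sort()
        first = dates[0]
        days_to_second = (dates[1] - first).days if len(dates) > 1 else None
        cohorts[first.strftime("%Y-%m")].append((first, days_to_second))

    table = {}
    for month, members in sorted(cohorts.items()):
        table[month] = {}
        for window in WINDOWS:
            # Only count customers who have had the full window to reorder.
            eligible = [d for first, d in members if (as_of - first).days >= window]
            if not eligible:
                table[month][window] = None
                continue
            repeated = sum(1 for d in eligible if d is not None and d <= window)
            table[month][window] = repeated / len(eligible)
    return table


# Hypothetical orders, invented for illustration.
orders = [
    ("a", date(2024, 1, 5)), ("a", date(2024, 1, 25)),
    ("b", date(2024, 1, 10)), ("b", date(2024, 3, 20)),
    ("c", date(2024, 1, 20)),
    ("d", date(2024, 2, 3)), ("d", date(2024, 2, 18)),
    ("e", date(2024, 2, 14)),
]

table = cohort_repeat_rates(orders, as_of=date(2024, 7, 31))
for month, rates in table.items():
    cells = ", ".join(
        f"{w}d: " + ("n/a" if r is None else f"{r:.0%}") for w, r in rates.items()
    )
    print(month, cells)
```

Each row of the resulting table is one monthly cohort and each column is one window, which is the shape you would plot or paste into a spreadsheet.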

What you see will surprise you. The shape of the retention curve, the differences between months, and the points where things changed will tell you stories that aggregate dashboards never showed.

Then do the same exercise by acquisition channel. You will usually find that one channel produces noticeably better customers than the others, possibly dramatically better. And that channel almost certainly has lower volume than your largest channel, which is why you have not been prioritising it.
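A channel comparison can be as simple as the sketch below. The record layout, the function name `channel_summary`, the channel labels, and the figures are all invented for illustration. For a fair read, compare channels over customers acquired in the same period so that older customers do not flatter one channel.

```python
from collections import defaultdict

# Hypothetical records: (customer_id, channel, order_count, total_spend).
customers = [
    ("a", "meta", 1, 40.0),
    ("b", "meta", 1, 35.0),
    ("c", "meta", 2, 90.0),
    ("d", "meta", 1, 45.0),
    ("e", "organic", 3, 180.0),
    ("f", "organic", 2, 120.0),
]


def channel_summary(customers):
    """Return customer count, repeat rate, and average spend per channel."""
    grouped = defaultdict(list)
    for _, channel, order_count, total_spend in customers:
        grouped[channel].append((order_count, total_spend))

    summary = {}
    for channel, rows in grouped.items():
        n = len(rows)
        summary[channel] = {
            "customers": n,
            # A repeat customer is anyone with two or more orders.
            "repeat_rate": sum(1 for orders, _ in rows if orders >= 2) / n,
            "avg_spend": sum(spend for _, spend in rows) / n,
        }
    return summary


summary = channel_summary(customers)
for channel, stats in summary.items():
    print(
        f"{channel}: {stats['customers']} customers, "
        f"repeat rate {stats['repeat_rate']:.0%}, "
        f"average spend {stats['avg_spend']:.2f}"
    )
```

In this made-up data the larger channel has the lower repeat rate and the lower average spend, which is the pattern to look for in your own numbers.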

What to do with what you find

The most important thing cohort analysis does is force you to ask better questions about your business.

If your newest cohorts are performing worse, why? Is it that you scaled paid acquisition into less qualified audiences? Is it that your product experience has changed in some way that newer customers do not love? Is it that the early customer base was self-selecting and motivated, and the broader market is harder to please?

If one channel is producing dramatically better customers than others, why? What is true about how those customers find you that makes them so much more valuable? Can you invest more there? Can you replicate the dynamics of that channel elsewhere?

If a recent product change improved retention for new cohorts but the aggregate barely moved, you now have proof that your product roadmap is creating real value, even though the headline numbers do not show it yet. That changes how you talk about the business internally and with investors.

The aggregate is comfortable. It always seems to tell a stable story. The cohorts are uncomfortable. They tell you what is actually happening, including the things you would rather not know. But the brands that build long-term value are the ones that learn to operate from cohort truth rather than aggregate comfort.

Open your customer data this week. Build the simplest cohort table you can. The story it tells will be a more accurate picture of your business than any number in your monthly board deck.

See you in the next edition, Arindam