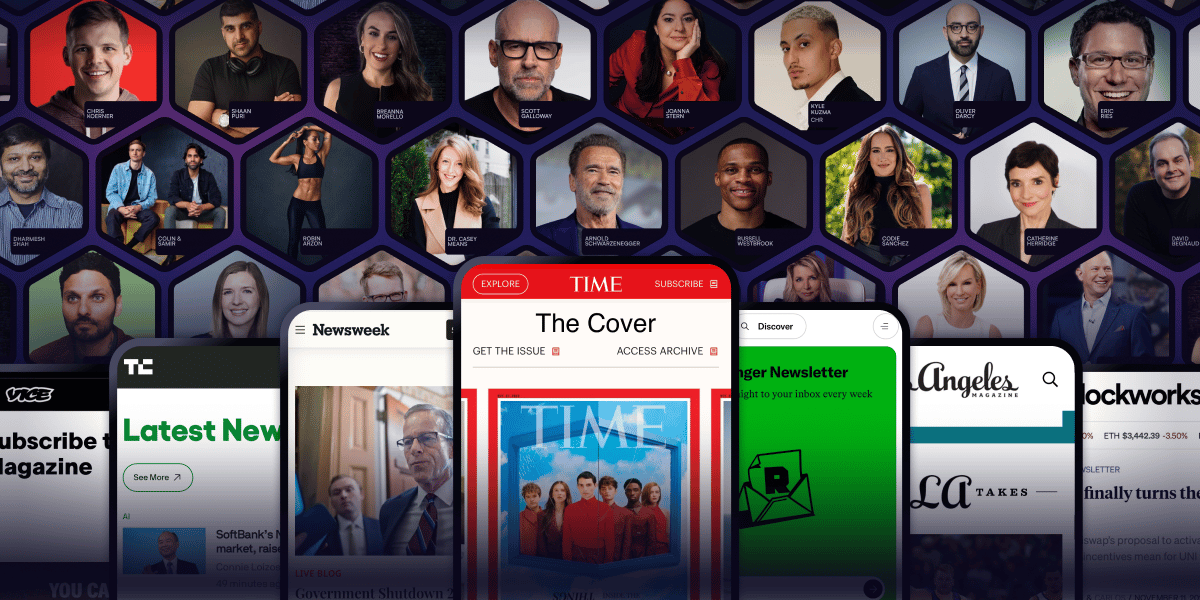

There is a quiet epidemic in growth marketing right now.

Brands are running A/B tests, declaring winners, rolling out changes, and watching their conversion rates not move the way they thought they would. The dashboard says one variant won. The next month's revenue says nothing actually changed.

The reason this keeps happening is uncomfortable. Most A/B tests run by most brands are not statistically valid. The "winners" being declared are not real. And the optimisation cycle that everyone is so committed to is producing motion without progress.

This edition is about what is actually broken in how A/B testing is being practised, and what a useful testing program looks like instead. Not a textbook explanation. The version someone running tests for a living needs to hear.

The first problem is the sample size

Almost every A/B test calculator on the internet will tell you that you need a sample size of around 5,000 to 15,000 visitors per variant to detect a meaningful change in conversion rate, depending on your baseline conversion rate and the size of the change you want to detect.
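The range depends on two inputs: your baseline conversion rate and the smallest lift you care about. Below is a minimal sketch of the standard normal-approximation sample-size formula for comparing two proportions. It assumes a two-sided test at 5% significance and 80% power. Those are common calculator defaults, but individual calculators may use different settings.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect `relative_lift` over `baseline`.

    Normal-approximation formula for a two-sided two-proportion test.
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)            # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# A 3% baseline conversion rate and a 20% relative lift (3.0% -> 3.6%).
print(sample_size_per_variant(0.03, 0.20))
```

Under these assumptions, a 3% baseline with a 20% relative lift works out to roughly 14,000 visitors per variant.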

Most brands are running tests on pages that get 800 visitors a week. They run for two weeks, see one variant outperform the other, and ship the change.

That decision is statistically meaningless. With sample sizes that small, normal random variation in user behaviour will produce winners and losers regardless of whether there is any real difference between the variants. Run the same test ten times, and the "winner" will flip back and forth between variants, looking equally confident each time.
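You can see this by simulating an A/A test. In an A/A test, both variants share the same true conversion rate, so any "winner" is pure noise. Below is a minimal sketch. It assumes a 3% conversion rate and 800 visitors per variant, which is the two-week, 800-visitors-a-week scenario split evenly. These are illustrative numbers, not data from a real test.

```python
import random


def simulate_aa_tests(runs: int, visitors_per_variant: int,
                      true_rate: float, seed: int = 7) -> list[tuple[int, int]]:
    """Simulate A/A tests: both variants share `true_rate`, so any gap is noise.

    Returns (conversions_a, conversions_b) for each run.
    """
    rng = random.Random(seed)
    results = []
    for _ in range(runs):
        conv_a = sum(rng.random() < true_rate for _ in range(visitors_per_variant))
        conv_b = sum(rng.random() < true_rate for _ in range(visitors_per_variant))
        results.append((conv_a, conv_b))
    return results


for conv_a, conv_b in simulate_aa_tests(runs=10, visitors_per_variant=800, true_rate=0.03):
    winner = "A" if conv_a > conv_b else "B" if conv_b > conv_a else "tie"
    # Apparent relative lift of B over A, guarding against a zero denominator.
    lift = (conv_b - conv_a) / max(conv_a, 1)
    print(f"A={conv_a:3d}  B={conv_b:3d}  winner={winner:<3}  apparent lift of B: {lift:+.0%}")
```

In runs like these, apparent lifts of 20% or more in either direction typically show up often, even though nothing differs between the variants.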

The brands that are testing well at low traffic levels know this. They either test changes large enough that even small samples will detect them, or they accept that most pages on their site are not testable through A/B and need a different optimisation approach entirely.
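To judge whether a page is testable at all, you can turn the question around. Ask what the smallest reliably detectable lift is for the traffic you actually have. Below is a minimal sketch. It assumes a 3% baseline conversion rate and a two-sided test at 5% significance and 80% power. It also simplifies by assuming both variants have the baseline's variance. None of these figures come from the text.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_lift(baseline: float, visitors_per_variant: int,
                            alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest relative lift detectable with the given traffic (approximation).

    Assumes both variants have roughly the baseline variance, which is
    slightly optimistic when the true lift is large.
    """
    norm = NormalDist()
    z_total = norm.inv_cdf(1 - alpha / 2) + norm.inv_cdf(power)
    abs_diff = z_total * sqrt(2 * baseline * (1 - baseline) / visitors_per_variant)
    return abs_diff / baseline


for n in (800, 5_000, 50_000):
    print(f"{n:>6} visitors/variant: smallest detectable lift ~{minimum_detectable_lift(0.03, n):.0%}")
```

Under those assumptions, 800 visitors per variant can only reliably detect a relative lift of around 80%. At 5,000 per variant that falls to around 32%, and at 50,000 per variant to around 10%.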

The second problem is what is being tested

Walk into most growth teams and ask what they tested last quarter. The list will sound something like this. Button colour. CTA copy. Hero image variation. Headline tweak. Form field reduction.

These are the wrong things to test for almost every brand under 100,000 monthly visitors.

Small changes produce small effects. Detecting a small effect requires huge sample sizes. So a brand with limited traffic running tests on small changes will rarely reach statistical significance. It will still run dozens of tests, "find" winners in random noise, and feel like it is optimising.
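The "find winners in noise" effect compounds across a testing program. Suppose each test uses a 5% significance threshold and none of the changes has any real effect. The chance that at least one of k tests still produces a significant "winner" is then 1 - 0.95^k. Below is a minimal sketch, assuming the tests are independent.

```python
def chance_of_false_winner(num_tests: int, alpha: float = 0.05) -> float:
    """Probability that at least one of `num_tests` no-effect tests looks significant.

    Assumes the tests are independent and each uses threshold `alpha`.
    """
    return 1 - (1 - alpha) ** num_tests


for k in (1, 10, 24):
    print(f"{k:>2} tests: {chance_of_false_winner(k):.0%} chance of at least one false winner")
```

With ten tests of changes that do nothing, the chance of at least one false winner is already about 40%. With 24 tests (two a month for a year), it is about 71%.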

The tests that actually move metrics for low-traffic brands are big. Completely different page structures. Radically different value propositions. Hero sections that aren't variations of each other but are entirely different stories about what the product is. These produce effect sizes large enough that you can actually see them in the data.

A 25% relative lift can be detected with a few thousand visitors per variant. A 2% relative lift typically needs hundreds of thousands, and often more.
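The gap comes from how the required sample scales. It grows roughly with the inverse square of the difference you are trying to detect, so a lift about 12 times smaller needs well over 100 times the traffic. Below is a minimal sketch that estimates power, meaning the chance that a real lift of a given size is detected, at a fixed traffic level. It assumes a two-sided z-test at 5% significance, a 10% baseline conversion rate and 3,000 visitors per variant. These numbers are illustrative assumptions, not figures from the text.

```python
from math import sqrt
from statistics import NormalDist


def power_at_traffic(baseline: float, relative_lift: float,
                     visitors_per_variant: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-proportion z-test.

    Normal approximation with unpooled variance; counts both rejection tails.
    """
    norm = NormalDist()
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    se = sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / visitors_per_variant)
    z_alpha = norm.inv_cdf(1 - alpha / 2)
    shift = abs(p2 - p1) / se  # expected z-score of the observed difference
    return norm.cdf(shift - z_alpha) + norm.cdf(-shift - z_alpha)


for lift in (0.25, 0.02):
    p = power_at_traffic(baseline=0.10, relative_lift=lift, visitors_per_variant=3_000)
    print(f"{lift:.0%} relative lift: power {p:.0%}")
```

Under these assumptions, the 25% lift is detected most of the time, with power above 80%. The 2% lift is detected about 6% of the time, barely more often than the 5% false-positive rate.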

The third problem is the testing culture

Most teams treat A/B testing as a permanent state. There is always a test running. The team's identity becomes tied to running them. The number of tests run per quarter becomes a KPI in itself.

This is backwards. A/B testing is not a strategy. It is a tool for the specific moment when you have a hypothesis worth validating and the traffic to validate it. Running tests for the sake of running them produces a culture where the team is constantly tweaking surface elements and never doing the deeper strategic work that actually moves conversion rates.

The brands that grow conversion meaningfully usually do so through a small number of large structural changes, not a large number of small optimisations. The structural change comes from understanding the customer better. From a fresh insight about what they actually need to see before they convert. Not from testing whether the button should be green or orange.

What to do instead

For brands with serious traffic, run fewer tests but make each one bigger. Test radically different versions of the page rather than minor variations. Accept that 70% of tests will not produce a winner, and that is fine. The 30% that do will produce real, durable improvements.
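"Producing a winner" should mean the result clears a pre-set significance threshold at the planned sample size. One number being bigger on the dashboard is not enough. Below is a minimal sketch of a two-sided two-proportion z-test using only the standard library. The conversion counts are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for H0: both variants share one conversion rate."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # common rate under the null hypothesis
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same 25% relative gap (3.0% vs 3.75%) at two traffic levels.
print(two_proportion_p_value(24, 800, 30, 800))
print(two_proportion_p_value(240, 8_000, 300, 8_000))
```

The first comparison (p ≈ 0.41) is not a winner. The same relative gap at ten times the traffic clears the 0.05 threshold (p < 0.01).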

For brands without serious traffic, stop running A/B tests as your primary optimisation strategy. The traffic isn't there to support it. Replace it with two things. First, qualitative research. Five hour-long conversations with real customers will produce insights that 50 small A/B tests will not. Second, structural changes based on those insights. When you understand the customer's real hesitation, you don't need to test whether removing it works. You can ship the change with confidence and watch the metric move.

The brands that obsess over testing every change are usually the ones that don't have a clear thesis about what their customers need. The testing becomes a substitute for thinking.

The brands that grow conversion fast are the ones that did the thinking first, made bold changes, and used data to confirm rather than to discover.

The honest question

Look at your last 10 A/B tests. How many had statistically meaningful sample sizes? How many of the "winners" you shipped resulted in measurable revenue changes you could trace back to that test? How many of the changes were so small that even if they did work, the cumulative impact on your business was negligible?

If the honest answer is that your testing program is producing more dashboard activity than business impact, that is the real problem to solve.

Test less. Test bigger. Think more.

See you in the next edition,
Arindam